Upload folder using huggingface_hub

- README.md +209 -0
- added_tokens.json +33 -0
- config.json +225 -0
- configuration_intern_vit.py +120 -0
- configuration_internvl_chat.py +97 -0
- conversation.py +391 -0
- examples/image.png +0 -0
- generation_config.json +4 -0
- merges.txt +0 -0
- model-00001-of-00004.safetensors +3 -0
- model-00002-of-00004.safetensors +3 -0
- model-00003-of-00004.safetensors +3 -0
- model-00004-of-00004.safetensors +3 -0
- model.safetensors.index.json +692 -0
- modeling_intern_vit.py +431 -0
- modeling_internvl_chat.py +564 -0
- preprocessor_config.json +19 -0
- special_tokens_map.json +31 -0
- tokenizer.json +0 -0
- tokenizer_config.json +281 -0
- vocab.json +0 -0

README.md

ADDED

@@ -0,0 +1,209 @@

---
license: mit
pipeline_tag: image-text-to-text
library_name: transformers
base_model:
- OpenGVLab/InternVL2_5-8B
- OpenGVLab/InternVL2_5-8B-MPO
base_model_relation: finetune
datasets:
- OpenGVLab/MMPR-v1.2
- OpenGVLab/VisualPRM400K-v1.1
language:
- multilingual
tags:
- internvl
- custom_code
---

|

| 18 |

+

|

| 19 |

+

# VisualPRM-8B-v1.1

|

| 20 |

+

|

| 21 |

+

[\[📂 GitHub\]](https://github.com/OpenGVLab/InternVL)

|

| 22 |

+

[\[📜 Paper\]](https://arxiv.org/abs/2503.10291)

|

| 23 |

+

[\[🆕 Blog\]](https://internvl.github.io/blog/2025-03-13-VisualPRM/)

|

| 24 |

+

[\[🤗 model\]](https://huggingface.co/OpenGVLab/VisualPRM-8B-v1.1)

|

| 25 |

+

[\[🤗 dataset\]](https://huggingface.co/datasets/OpenGVLab/VisualPRM400K)

|

| 26 |

+

[\[🤗 benchmark\]](https://huggingface.co/datasets/OpenGVLab/VisualProcessBench)

|

| 27 |

+

|

| 28 |

+

***This is a newer version of [VisualPRM-8B](https://huggingface.co/OpenGVLab/VisualPRM-8B), which exhibits superior performance compared to [VisualPRM-8B](https://huggingface.co/OpenGVLab/VisualPRM-8B).***

|

| 29 |

+

|

| 30 |

+

## Introduction

|

| 31 |

+

|

| 32 |

+

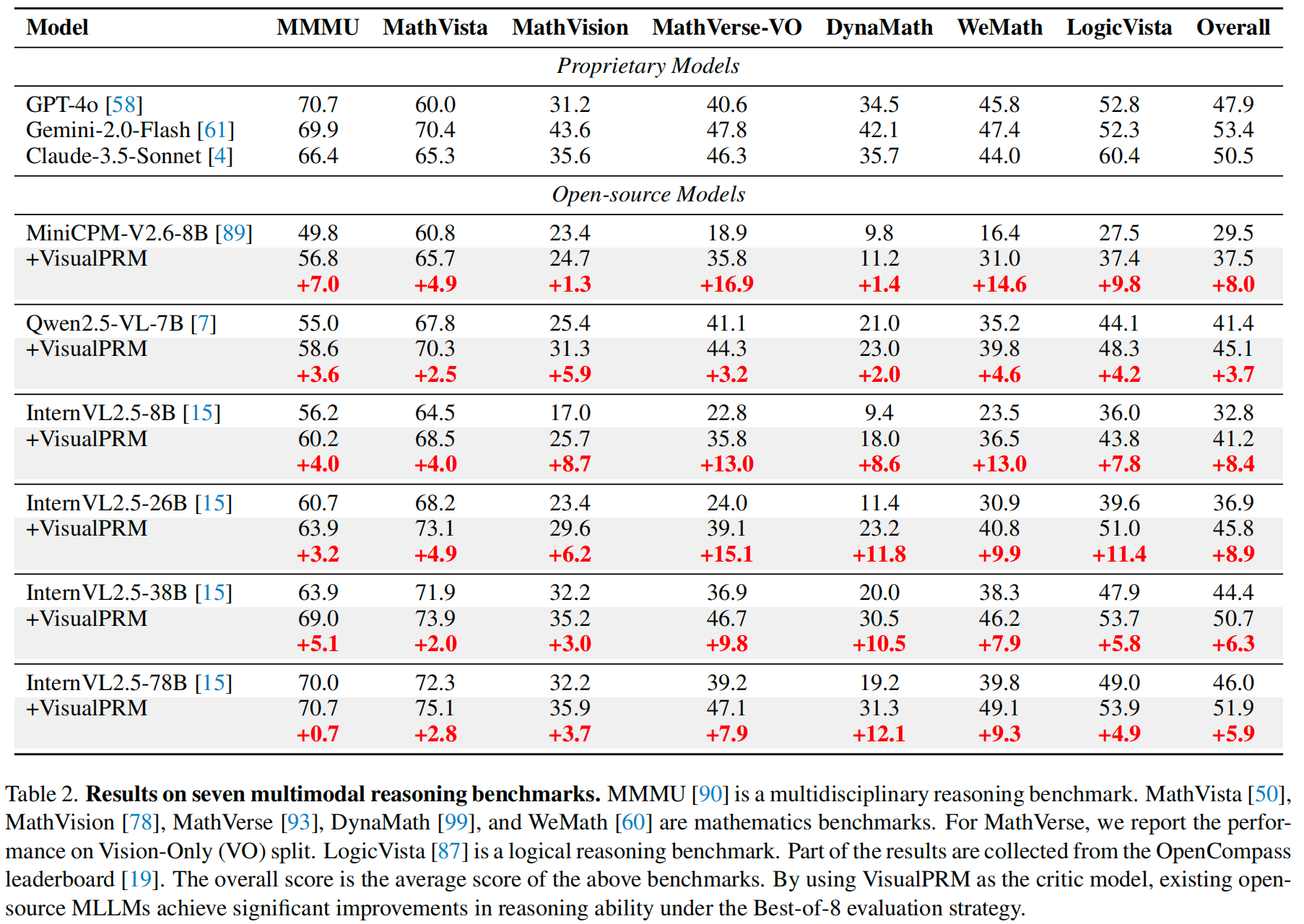

We introduce VisualPRM, an advanced multimodal Process Reward Model (PRM) with 8B parameters. Under Best-of-N (BoN) evaluation, it improves the reasoning abilities of existing Multimodal Large Language Models (MLLMs) across different model scales and families. **Specifically, our model improves the reasoning performance of three types of MLLMs and four different model scales. Even when applied to the highly capable InternVL2.5-78B, it achieves a 5.9-point improvement across seven multimodal reasoning benchmarks.** Experimental results show that our model outperforms Outcome Reward Models and Self-Consistency in BoN evaluation. To facilitate the training of multimodal PRMs, we build VisualPRM400K, a multimodal process supervision dataset, with an automated data pipeline. To evaluate multimodal PRMs, we propose VisualProcessBench, a benchmark with human-annotated step-wise correctness labels that measures how well PRMs detect erroneous steps in multimodal reasoning tasks. We hope that our work inspires future research and contributes to the development of MLLMs.
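Best-of-N selection with a PRM works in three steps. Each of the N candidate responses receives one score per reasoning step. The step scores are then aggregated into a single response score, and the candidates are ranked by that score. The sketch below is minimal and is not the implementation of `select_best_response`:

- Averaging the step scores is an assumption; the card does not say how steps are aggregated.
- `aggregate_steps` and `rank_responses` are hypothetical helpers.
- The step scores are made-up values.

```python
from statistics import mean
from typing import Sequence


def aggregate_steps(step_scores: Sequence[float]) -> float:
    # Assumption: a response's score is the mean of its per-step PRM scores.
    return mean(step_scores)


def rank_responses(responses: Sequence[str],
                   step_scores: Sequence[Sequence[float]]) -> list[tuple[str, float]]:
    # Pair each candidate with its aggregated score and sort best first,
    # giving the same (response, score) pair layout the README example indexes.
    scored = [(resp, aggregate_steps(scores)) for resp, scores in zip(responses, step_scores)]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


ranked = rank_responses(
    ["Final answer: 51", "Final answer: 42"],
    [[0.9, 0.8, 0.85], [0.9, 0.3, 0.2]],  # hypothetical per-step scores
)
print(ranked[0][0])  # Final answer: 51
```

Swapping `mean` for `min` in `aggregate_steps` would make a single bad step dominate the ranking. That is a common alternative design choice.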

## Performance

## Inference with Transformers

```python
import torch
import torchvision.transforms as T

from PIL import Image
from transformers import AutoModel, AutoTokenizer
from torchvision.transforms.functional import InterpolationMode

IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)

def build_transform(input_size):
    MEAN, STD = IMAGENET_MEAN, IMAGENET_STD
    transform = T.Compose([
        T.Lambda(lambda img: img.convert('RGB') if img.mode != 'RGB' else img),
        T.Resize((input_size, input_size), interpolation=InterpolationMode.BICUBIC),
        T.ToTensor(),
        T.Normalize(mean=MEAN, std=STD)
    ])
    return transform

def find_closest_aspect_ratio(aspect_ratio, target_ratios, width, height, image_size):
    best_ratio_diff = float('inf')
    best_ratio = (1, 1)
    area = width * height
    for ratio in target_ratios:
        target_aspect_ratio = ratio[0] / ratio[1]
        ratio_diff = abs(aspect_ratio - target_aspect_ratio)
        if ratio_diff < best_ratio_diff:
            best_ratio_diff = ratio_diff
            best_ratio = ratio
        elif ratio_diff == best_ratio_diff:
            # on a tie, switch to the larger grid only if the image has enough pixels to fill it
            if area > 0.5 * image_size * image_size * ratio[0] * ratio[1]:
                best_ratio = ratio
    return best_ratio

def dynamic_preprocess(image, min_num=1, max_num=12, image_size=448, use_thumbnail=False):
    orig_width, orig_height = image.size
    aspect_ratio = orig_width / orig_height

    # enumerate candidate tile grids (columns, rows) whose tile count lies in [min_num, max_num]
    target_ratios = set(
        (i, j) for n in range(min_num, max_num + 1) for i in range(1, n + 1) for j in range(1, n + 1) if
        i * j <= max_num and i * j >= min_num)
    target_ratios = sorted(target_ratios, key=lambda x: x[0] * x[1])

    # find the closest aspect ratio to the target
    target_aspect_ratio = find_closest_aspect_ratio(
        aspect_ratio, target_ratios, orig_width, orig_height, image_size)

    # calculate the target width and height
    target_width = image_size * target_aspect_ratio[0]
    target_height = image_size * target_aspect_ratio[1]
    blocks = target_aspect_ratio[0] * target_aspect_ratio[1]

    # resize the image
    resized_img = image.resize((target_width, target_height))
    processed_images = []
    for i in range(blocks):
        box = (
            (i % (target_width // image_size)) * image_size,
            (i // (target_width // image_size)) * image_size,
            ((i % (target_width // image_size)) + 1) * image_size,
            ((i // (target_width // image_size)) + 1) * image_size
        )
        # split the image
        split_img = resized_img.crop(box)
        processed_images.append(split_img)
    assert len(processed_images) == blocks
    if use_thumbnail and len(processed_images) != 1:
        thumbnail_img = image.resize((image_size, image_size))
        processed_images.append(thumbnail_img)
    return processed_images

def load_image(image, input_size=448, max_num=12):
    image = Image.open(image).convert('RGB')
    transform = build_transform(input_size=input_size)
    images = dynamic_preprocess(image, image_size=input_size, use_thumbnail=True, max_num=max_num)
    pixel_values = [transform(image) for image in images]
    pixel_values = torch.stack(pixel_values)
    return pixel_values

def main():
    path = 'OpenGVLab/VisualPRM-8B-v1.1'
    tokenizer = AutoTokenizer.from_pretrained(path, trust_remote_code=True, use_fast=False)
    model = AutoModel.from_pretrained(
        path,
        trust_remote_code=True,
        low_cpu_mem_usage=True,
        torch_dtype=torch.bfloat16,
    ).eval().cuda()

    question = 'Find m \\angle 3.'

    response_a = """
To find the measure of \\angle 3, we need to use the properties of the angles in the given geometric figure.

1. \\angle EDC is a right angle, and it is split into \\angle EDF and \\angle 3.

2. We know that \\angle EDF = 39^\\circ because it is labeled in the diagram.

3. Since \\angle EDC is 90^\\circ, we can set up the equation for \\angle 3: \\angle EDC = \\angle EDF + \\angle 3

4. Substituting the known values, we get: 90^\\circ = 39^\\circ + \\angle 3

5. Solving for \\angle 3: \\angle 3 = 90^\\circ - 39^\\circ

6. Simplifying the equation: \\angle 3 = 51^\\circ

Final answer: 51^\\circ
""".strip()

    response_b = """
To find \\( m \\angle 3 \\), we need to analyze the given geometric figure carefully.

1. Recognize that \\( \\angle 3 \\) is part of triangle \\( BCE \\). Since \\( \\angle 1 \\) is given as 48° and \\( \\angle 2 \\) is a right angle (90°), we can use the properties of a right triangle to find \\( \\angle 3 \\).

2. The sum of the angles in any triangle is 180°. Therefore, in triangle \\( BCE \\): \\[ \\angle 1 + \\angle 2 + \\angle 3 = 180° \\]

3. Substitute the known values: \\[ 48° + 90° + \\angle 3 = 180° \\]

4. Solve for \\( \\angle 3 \\): \\[ \\angle 3 = 180° - 48° - 90° = 42° \\]

Thus, the measure of \\( \\angle 3 \\) is 42°.

Final answer: 42
""".strip()

    response_list = [
        response_a,
        response_b,
    ]
    image = 'examples/image.png'
    pixel_values = load_image(image).to(torch.bfloat16).cuda()

    sorted_response_list = model.select_best_response(
        tokenizer=tokenizer,
        question=question,
        response_list=response_list,
        pixel_values=pixel_values,
        return_scores=True,
    )

    print('Best response:', sorted_response_list[0][0])
    print('Highest score:', sorted_response_list[0][1])

if __name__ == '__main__':
    main()
```
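`dynamic_preprocess` picks a grid of 448×448 tiles whose shape best matches the image's aspect ratio. It adds a global thumbnail whenever more than one tile is used. You can predict the tile count without PIL or a GPU using the pure-Python sketch below, which mirrors that selection logic:

- `choose_grid` and `num_tiles` are hypothetical helpers.
- The grids are generated as a deterministic list rather than a set, so ordering could differ from the original only in rare ties between equal-area grids.

```python
def candidate_grids(min_num: int = 1, max_num: int = 12) -> list[tuple[int, int]]:
    # All (columns, rows) grids with min_num <= columns * rows <= max_num,
    # ordered by tile count (stable sort keeps generation order on ties).
    grids = [(i, j) for i in range(1, max_num + 1) for j in range(1, max_num + 1)
             if min_num <= i * j <= max_num]
    return sorted(grids, key=lambda g: g[0] * g[1])


def choose_grid(width: int, height: int, image_size: int = 448,
                min_num: int = 1, max_num: int = 12) -> tuple[int, int]:
    aspect_ratio = width / height
    best_diff, best = float("inf"), (1, 1)
    for cols, rows in candidate_grids(min_num, max_num):
        diff = abs(aspect_ratio - cols / rows)
        if diff < best_diff:
            best_diff, best = diff, (cols, rows)
        elif diff == best_diff and width * height > 0.5 * image_size ** 2 * cols * rows:
            # tie on aspect ratio: move to the larger grid only if the image is big enough
            best = (cols, rows)
    return best


def num_tiles(width: int, height: int, image_size: int = 448, max_num: int = 12,
              use_thumbnail: bool = True) -> int:
    cols, rows = choose_grid(width, height, image_size, max_num=max_num)
    tiles = cols * rows
    # a thumbnail is appended only when the image was split into more than one tile
    return tiles + 1 if use_thumbnail and tiles != 1 else tiles


print(choose_grid(896, 448), num_tiles(896, 448))    # (2, 1) 3
print(choose_grid(1792, 896), num_tiles(1792, 896))  # (4, 2) 9
```

The second call shows the tie-break at work. Both 2×1 and 4×2 match the 2:1 aspect ratio exactly, and the larger image has enough pixels to justify eight tiles.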

## License

This project is released under the MIT License. This project uses the pre-trained internlm2_5-7b-chat as a component, which is licensed under the Apache License 2.0.

## Citation

If you find this project useful in your research, please consider citing:

```BibTeX
@article{wang2025visualprm,
  title={VisualPRM: An Effective Process Reward Model for Multimodal Reasoning},
  author={Wang, Weiyun and Gao, Zhangwei and Chen, Lianjie and Chen, Zhe and Zhu, Jinguo and Zhao, Xiangyu and Liu, Yangzhou and Cao, Yue and Ye, Shenglong and Zhu, Xizhou and others},
  journal={arXiv preprint arXiv:2503.10291},
  year={2025}
}
```

added_tokens.json

ADDED

@@ -0,0 +1,33 @@

{
  "</box>": 151673,
  "</img>": 151666,
  "</quad>": 151669,
  "</ref>": 151671,
  "</tool_call>": 151658,
  "<IMG_CONTEXT>": 151667,
  "<box>": 151672,
  "<img>": 151665,
  "<quad>": 151668,
  "<ref>": 151670,
  "<tool_call>": 151657,
  "<|box_end|>": 151649,
  "<|box_start|>": 151648,
  "<|endoftext|>": 151643,
  "<|file_sep|>": 151664,
  "<|fim_middle|>": 151660,
  "<|fim_pad|>": 151662,
  "<|fim_prefix|>": 151659,
  "<|fim_suffix|>": 151661,
  "<|im_end|>": 151645,
  "<|im_start|>": 151644,
  "<|image_pad|>": 151655,
  "<|object_ref_end|>": 151647,
  "<|object_ref_start|>": 151646,
  "<|quad_end|>": 151651,
  "<|quad_start|>": 151650,
  "<|repo_name|>": 151663,
  "<|video_pad|>": 151656,
  "<|vision_end|>": 151653,
  "<|vision_pad|>": 151654,
  "<|vision_start|>": 151652
}
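The `<img>`, `<IMG_CONTEXT>` and `</img>` entries above are special tokens that InternVL-style models use to mark where visual features enter the text sequence. The sketch below shows how a prompt placeholder could be expanded into them. It rests on these assumptions:

- The prompt contains a literal `<image>` placeholder.
- Each tile contributes a fixed number of `<IMG_CONTEXT>` slots, which the model later fills with projected vision features.
- `expand_image_placeholder` is a hypothetical helper, not a function from this repository.

```python
IMG_START_TOKEN = "<img>"
IMG_END_TOKEN = "</img>"
IMG_CONTEXT_TOKEN = "<IMG_CONTEXT>"


def expand_image_placeholder(prompt: str, num_image_token: int, num_patches: int,
                             placeholder: str = "<image>") -> str:
    # Hypothetical helper: the placeholder string is an assumption.
    # One <IMG_CONTEXT> slot per visual token per tile, wrapped in <img> ... </img>.
    image_tokens = (IMG_START_TOKEN
                    + IMG_CONTEXT_TOKEN * (num_image_token * num_patches)
                    + IMG_END_TOKEN)
    return prompt.replace(placeholder, image_tokens, 1)


expanded = expand_image_placeholder("<image>\nFind m \\angle 3.", num_image_token=256, num_patches=3)
print(expanded.count(IMG_CONTEXT_TOKEN))  # 768
```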

config.json

ADDED

@@ -0,0 +1,225 @@

{
  "_commit_hash": null,
  "_name_or_path": "/mnt/petrelfs/share_data/wangweiyun/share_internvl/InternVL3-8B-MPO",
  "architectures": [
    "InternVLRewardModel"
  ],
  "auto_map": {
    "AutoConfig": "configuration_internvl_chat.InternVLChatConfig",
    "AutoModel": "modeling_internvl_chat.InternVLRewardModel",
    "AutoModelForCausalLM": "modeling_internvl_chat.InternVLRewardModel"
  },
  "downsample_ratio": 0.5,
  "dynamic_image_size": true,
  "force_image_size": 448,
  "hidden_size": 3584,
  "image_fold": null,
  "llm_config": {
    "_attn_implementation_autoset": true,
    "_name_or_path": "./pretrained/Qwen2.5-32B-Instruct",
    "add_cross_attention": false,
    "architectures": [
      "Qwen2ForCausalLM"
    ],
    "attention_dropout": 0.0,
    "attn_implementation": "flash_attention_2",
    "bad_words_ids": null,
    "begin_suppress_tokens": null,
    "bos_token_id": 151643,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": 151643,
    "exponential_decay_length_penalty": null,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "hidden_act": "silu",
    "hidden_size": 3584,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "initializer_range": 0.02,
    "intermediate_size": 18944,
    "is_decoder": false,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "length_penalty": 1.0,
    "max_length": 20,
    "max_position_embeddings": 32768,
    "max_window_layers": 70,
    "min_length": 0,
    "model_type": "qwen2",
    "moe_config": null,
    "no_repeat_ngram_size": 0,
    "num_attention_heads": 28,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_hidden_layers": 28,
    "num_key_value_heads": 4,
    "num_return_sequences": 1,
    "output_attentions": false,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": null,
    "prefix": null,
    "problem_type": null,
    "pruned_heads": {},
    "remove_invalid_values": false,
    "repetition_penalty": 1.0,
    "return_dict": true,
    "return_dict_in_generate": false,
    "rms_norm_eps": 1e-06,
    "rope_scaling": {
      "factor": 2.0,
      "rope_type": "dynamic",
      "type": "dynamic"
    },
    "rope_theta": 1000000.0,
    "sep_token_id": null,
    "sliding_window": null,
    "suppress_tokens": null,
    "task_specific_params": null,
    "temperature": 1.0,
    "tf_legacy_loss": false,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": false,
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": "bfloat16",
    "torchscript": false,
    "transformers_version": "4.37.2",
    "typical_p": 1.0,
    "use_bfloat16": true,
    "use_cache": false,
    "use_sliding_window": false,
    "vocab_size": 151674
  },
  "max_dynamic_patch": 12,
  "min_dynamic_patch": 1,
  "model_type": "internvl_chat",
  "pad2square": false,
  "ps_version": "v2",
  "select_layer": -1,
  "system_message": null,
  "template": "internvl2_5",
  "tie_word_embeddings": false,
  "torch_dtype": "bfloat16",
  "transformers_version": null,
  "use_backbone_lora": 0,
  "use_llm_lora": 0,
  "use_thumbnail": true,
  "vision_config": {
    "_attn_implementation_autoset": true,
    "_name_or_path": "OpenGVLab/InternViT-6B-448px-V1-5",
    "add_cross_attention": false,
    "architectures": [
      "InternVisionModel"
    ],
    "attention_dropout": 0.0,
    "auto_map": {
      "AutoConfig": "configuration_intern_vit.InternVisionConfig",
      "AutoModel": "modeling_intern_vit.InternVisionModel"
    },
    "bad_words_ids": null,
    "begin_suppress_tokens": null,
    "bos_token_id": null,
    "capacity_factor": 1.2,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "drop_path_rate": 0.1,
    "dropout": 0.0,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": null,
    "eval_capacity_factor": 1.4,
    "exponential_decay_length_penalty": null,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "hidden_act": "gelu",
    "hidden_size": 1024,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "image_size": 448,
    "initializer_factor": 0.1,
    "initializer_range": 1e-10,
    "intermediate_size": 4096,
    "is_decoder": false,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "laux_allreduce": "all_nodes",
    "layer_norm_eps": 1e-06,
    "length_penalty": 1.0,
    "max_length": 20,
    "min_length": 0,
    "model_type": "intern_vit_6b",
    "moe_coeff_ratio": 0.5,
    "moe_intermediate_size": 768,
    "moe_output_scale": 4.0,
    "no_repeat_ngram_size": 0,
    "noisy_gate_policy": "RSample_before",
    "norm_type": "layer_norm",
    "num_attention_heads": 16,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_channels": 3,
    "num_experts": 8,
    "num_hidden_layers": 24,
    "num_return_sequences": 1,
    "num_routed_experts": 4,
    "num_shared_experts": 4,
    "output_attentions": false,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": null,
    "patch_size": 14,
    "prefix": null,
    "problem_type": null,
    "pruned_heads": {},
    "qk_normalization": false,
    "qkv_bias": true,
    "remove_invalid_values": false,
    "repetition_penalty": 1.0,
    "return_dict": true,
    "return_dict_in_generate": false,
    "sep_token_id": null,
    "shared_expert_intermediate_size": 3072,
    "suppress_tokens": null,
    "task_specific_params": null,
    "temperature": 1.0,
    "tf_legacy_loss": false,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": true,
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": "bfloat16",
    "torchscript": false,
    "transformers_version": "4.37.2",
    "typical_p": 1.0,
    "use_bfloat16": true,
    "use_flash_attn": true,
    "use_moe": false,
    "use_residual": true,
    "use_rts": false,
    "use_weighted_residual": false
  }
}
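Several values in this config determine how much of the LLM context the images consume:

- `force_image_size`, `vision_config.patch_size` and `downsample_ratio` set the number of visual tokens per tile.
- `max_dynamic_patch` and `use_thumbnail` set the maximum number of tiles.
- The `llm_config` head counts set the attention layout.

The arithmetic is sketched below. It assumes the InternVL convention that pixel shuffle scales the per-tile token count by `downsample_ratio ** 2`. The function names are illustrative, and the default arguments are copied from this config.json.

```python
def image_tokens_per_tile(force_image_size: int = 448, patch_size: int = 14,
                          downsample_ratio: float = 0.5) -> int:
    patches_per_side = force_image_size // patch_size  # 448 // 14 = 32, i.e. 1024 ViT patches
    # pixel shuffle with ratio 0.5 merges each 2x2 block of patches into one token
    return int(patches_per_side ** 2 * downsample_ratio ** 2)  # 1024 * 0.25 = 256


def max_image_tokens(max_dynamic_patch: int = 12, use_thumbnail: bool = True, **kwargs) -> int:
    # worst case: every allowed tile is used, plus the global thumbnail
    tiles = max_dynamic_patch + (1 if use_thumbnail else 0)
    return tiles * image_tokens_per_tile(**kwargs)


def llm_attention_shape(hidden_size: int = 3584, num_attention_heads: int = 28,
                        num_key_value_heads: int = 4) -> tuple[int, int]:
    head_dim = hidden_size // num_attention_heads  # 3584 // 28 = 128
    # grouped-query attention: several query heads share each key/value head
    queries_per_kv_head = num_attention_heads // num_key_value_heads  # 28 // 4 = 7
    return head_dim, queries_per_kv_head


print(image_tokens_per_tile())  # 256
print(max_image_tokens())       # 3328
print(llm_attention_shape())    # (128, 7)
```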

configuration_intern_vit.py

ADDED

@@ -0,0 +1,120 @@

| 1 |

+

# --------------------------------------------------------

|

| 2 |

+

# InternVL

|

| 3 |

+

# Copyright (c) 2024 OpenGVLab

|

| 4 |

+

# Licensed under The MIT License [see LICENSE for details]

|

| 5 |

+

# --------------------------------------------------------

|

| 6 |

+

|

| 7 |

+

import os

|

| 8 |

+

from typing import Union

|

| 9 |

+

|

| 10 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 11 |

+

from transformers.utils import logging

|

| 12 |

+

|

| 13 |

+

logger = logging.get_logger(__name__)

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

class InternVisionConfig(PretrainedConfig):

|

| 17 |

+

r"""

|

| 18 |

+

This is the configuration class to store the configuration of a [`InternVisionModel`]. It is used to

|

| 19 |

+

instantiate a vision encoder according to the specified arguments, defining the model architecture.

|

| 20 |

+

|

| 21 |

+

Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

|

| 22 |

+

documentation from [`PretrainedConfig`] for more information.

|

| 23 |

+

|

| 24 |

+

Args:

|

| 25 |

+

num_channels (`int`, *optional*, defaults to 3):

|

| 26 |

+

Number of color channels in the input images (e.g., 3 for RGB).

|

| 27 |

+

patch_size (`int`, *optional*, defaults to 14):

|

| 28 |

+

The size (resolution) of each patch.

|

| 29 |

+

image_size (`int`, *optional*, defaults to 224):

|

| 30 |

+

The size (resolution) of each image.

|

| 31 |

+

qkv_bias (`bool`, *optional*, defaults to `False`):

|

| 32 |

+

Whether to add a bias to the queries and values in the self-attention layers.

|

| 33 |

+

hidden_size (`int`, *optional*, defaults to 3200):

|

| 34 |

+

Dimensionality of the encoder layers and the pooler layer.

|

| 35 |

+

num_attention_heads (`int`, *optional*, defaults to 25):

|

| 36 |

+

Number of attention heads for each attention layer in the Transformer encoder.

|

| 37 |

+

intermediate_size (`int`, *optional*, defaults to 12800):

|

| 38 |

+

Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.

|

| 39 |

+

qk_normalization (`bool`, *optional*, defaults to `True`):

|

| 40 |

+

Whether to normalize the queries and keys in the self-attention layers.

|

| 41 |

+

num_hidden_layers (`int`, *optional*, defaults to 48):

|

| 42 |

+

Number of hidden layers in the Transformer encoder.

|

| 43 |

+

use_flash_attn (`bool`, *optional*, defaults to `True`):

|

| 44 |

+

Whether to use flash attention mechanism.

|

| 45 |

+

hidden_act (`str` or `function`, *optional*, defaults to `"gelu"`):

|

| 46 |

+

The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

|

| 47 |

+

`"relu"`, `"selu"` and `"gelu_new"` ``"gelu"` are supported.

|

| 48 |

+

layer_norm_eps (`float`, *optional*, defaults to 1e-6):

|

| 49 |

+

The epsilon used by the layer normalization layers.

|

| 50 |

+

dropout (`float`, *optional*, defaults to 0.0):

|

| 51 |

+

The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

|

| 52 |

+

drop_path_rate (`float`, *optional*, defaults to 0.0):

|

| 53 |

+

Dropout rate for stochastic depth.

|

| 54 |

+

attention_dropout (`float`, *optional*, defaults to 0.0):

|

| 55 |

+

The dropout ratio for the attention probabilities.

|

| 56 |

+

initializer_range (`float`, *optional*, defaults to 0.02):

|

| 57 |

+

The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

|

| 58 |

+

initializer_factor (`float`, *optional*, defaults to 0.1):

|

| 59 |

+

A factor for layer scale.

|

| 60 |

+

"""


    model_type = 'intern_vit_6b'

    def __init__(
            self,
            num_channels=3,
            patch_size=14,
            image_size=224,
            qkv_bias=False,
            hidden_size=3200,
            num_attention_heads=25,
            intermediate_size=12800,
            qk_normalization=True,
            num_hidden_layers=48,
            use_flash_attn=True,
            hidden_act='gelu',
            norm_type='rms_norm',
            layer_norm_eps=1e-6,
            dropout=0.0,
            drop_path_rate=0.0,
            attention_dropout=0.0,
            initializer_range=0.02,
            initializer_factor=0.1,
            **kwargs,
    ):
        super().__init__(**kwargs)

        self.hidden_size = hidden_size
        self.intermediate_size = intermediate_size
        self.dropout = dropout
        self.drop_path_rate = drop_path_rate
        self.num_hidden_layers = num_hidden_layers
        self.num_attention_heads = num_attention_heads
        self.num_channels = num_channels
        self.patch_size = patch_size
        self.image_size = image_size
        self.initializer_range = initializer_range
        self.initializer_factor = initializer_factor
        self.attention_dropout = attention_dropout
        self.layer_norm_eps = layer_norm_eps
        self.hidden_act = hidden_act
        self.norm_type = norm_type
        self.qkv_bias = qkv_bias
        self.qk_normalization = qk_normalization
        self.use_flash_attn = use_flash_attn


    @classmethod
    def from_pretrained(cls, pretrained_model_name_or_path: Union[str, os.PathLike], **kwargs) -> 'PretrainedConfig':
        config_dict, kwargs = cls.get_config_dict(pretrained_model_name_or_path, **kwargs)

        if 'vision_config' in config_dict:
            config_dict = config_dict['vision_config']

        if 'model_type' in config_dict and hasattr(cls, 'model_type') and config_dict['model_type'] != cls.model_type:
            logger.warning(
                f"You are using a model of type {config_dict['model_type']} to instantiate a model of type "
                f'{cls.model_type}. This is not supported for all configurations of models and can yield errors.'
            )

        return cls.from_dict(config_dict, **kwargs)
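The `InternVisionConfig` defaults above fix the patch grid and the per-head width of the vision tower. A minimal sketch of that arithmetic, assuming square inputs whose side is a multiple of `patch_size` and heads that split `hidden_size` evenly; `vit_geometry` and `VitGeometry` are hypothetical names, not part of this repository.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VitGeometry:
    patches_per_side: int
    num_patches: int
    head_dim: int


def vit_geometry(image_size: int = 224, patch_size: int = 14,
                 hidden_size: int = 3200, num_attention_heads: int = 25) -> VitGeometry:
    """Derive the patch grid and head width from InternVisionConfig-style values (hypothetical helper)."""
    if image_size % patch_size != 0:
        raise ValueError('image_size must be a multiple of patch_size')
    if hidden_size % num_attention_heads != 0:
        raise ValueError('hidden_size must be divisible by num_attention_heads')
    side = image_size // patch_size  # patches along one edge of a square image
    return VitGeometry(
        patches_per_side=side,
        num_patches=side * side,  # patch tokens only, not counting any class token
        head_dim=hidden_size // num_attention_heads,
    )


print(vit_geometry())  # VitGeometry(patches_per_side=16, num_patches=256, head_dim=128)
print(vit_geometry(image_size=448).num_patches)  # 1024
```

With the defaults, 224 / 14 gives a 16 x 16 grid, and 3200 / 25 gives 128-dimensional heads.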
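`from_pretrained` unwraps a nested `vision_config` entry, so it can be pointed at a full InternVL chat checkpoint as well as at a standalone vision config. The sketch below reproduces only that dictionary logic in plain Python; it does not call `transformers` (the real method goes through `get_config_dict` and `from_dict`), and `select_vision_config` plus the sample dictionary are illustrative assumptions.

```python
import logging
from typing import Any, Dict

logger = logging.getLogger(__name__)


def select_vision_config(config_dict: Dict[str, Any],
                         expected_model_type: str = 'intern_vit_6b') -> Dict[str, Any]:
    """Mirror the unwrapping step of InternVisionConfig.from_pretrained (hypothetical helper)."""
    # A composite chat config nests the vision tower's settings under 'vision_config'.
    if 'vision_config' in config_dict:
        config_dict = config_dict['vision_config']
    # Like the original, only warn (do not raise) on a model_type mismatch.
    if 'model_type' in config_dict and config_dict['model_type'] != expected_model_type:
        logger.warning('model_type %r differs from %r; loading may yield errors.',
                       config_dict['model_type'], expected_model_type)
    return config_dict


# Illustrative values only; not copied from this repository's config.json.
chat_config = {
    'model_type': 'internvl_chat',
    'vision_config': {'model_type': 'intern_vit_6b', 'hidden_size': 3200},
    'llm_config': {'architectures': ['Qwen2ForCausalLM']},
}
print(select_vision_config(chat_config))  # {'model_type': 'intern_vit_6b', 'hidden_size': 3200}
```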

configuration_internvl_chat.py

ADDED

|

@@ -0,0 +1,97 @@


# --------------------------------------------------------
# InternVL
# Copyright (c) 2024 OpenGVLab
# Licensed under The MIT License [see LICENSE for details]
# --------------------------------------------------------

import copy

from transformers import AutoConfig, LlamaConfig, Qwen2Config
from transformers.configuration_utils import PretrainedConfig
from transformers.utils import logging

from .configuration_intern_vit import InternVisionConfig

logger = logging.get_logger(__name__)


class InternVLChatConfig(PretrainedConfig):
    model_type = 'internvl_chat'
    is_composition = True

    def __init__(
            self,
            vision_config=None,
            llm_config=None,
            use_backbone_lora=0,
            use_llm_lora=0,
            select_layer=-1,
            force_image_size=None,
            downsample_ratio=0.5,
            template=None,
            dynamic_image_size=False,
            use_thumbnail=False,
            ps_version='v1',
            min_dynamic_patch=1,
            max_dynamic_patch=6,
            **kwargs):
        super().__init__(**kwargs)

        if vision_config is None:
            vision_config = {'architectures': ['InternVisionModel']}
            logger.info('vision_config is None. Initializing the InternVisionConfig with default values.')

        if llm_config is None:
            llm_config = {'architectures': ['Qwen2ForCausalLM']}
            logger.info('llm_config is None. Initializing the LLM config with default values (`Qwen2Config`).')

        self.vision_config = InternVisionConfig(**vision_config)
        if llm_config.get('architectures')[0] == 'LlamaForCausalLM':
            self.llm_config = LlamaConfig(**llm_config)
        elif llm_config.get('architectures')[0] == 'Qwen2ForCausalLM':
            self.llm_config = Qwen2Config(**llm_config)
        else:
            raise ValueError('Unsupported architecture: {}'.format(llm_config.get('architectures')[0]))
        self.use_backbone_lora = use_backbone_lora
        self.use_llm_lora = use_llm_lora
        self.select_layer = select_layer
        self.force_image_size = force_image_size
        self.downsample_ratio = downsample_ratio
        self.template = template
        self.dynamic_image_size = dynamic_image_size
        self.use_thumbnail = use_thumbnail
        self.ps_version = ps_version  # pixel shuffle version
        self.min_dynamic_patch = min_dynamic_patch
        self.max_dynamic_patch = max_dynamic_patch
        # Inherit tie_word_embeddings from the LLM config; by default it is False for models of all sizes.
        self.tie_word_embeddings = self.llm_config.tie_word_embeddings

        logger.info(f'vision_select_layer: {self.select_layer}')
        logger.info(f'ps_version: {self.ps_version}')
        logger.info(f'min_dynamic_patch: {self.min_dynamic_patch}')
        logger.info(f'max_dynamic_patch: {self.max_dynamic_patch}')


    def to_dict(self):
        """
        Serializes this instance to a Python dictionary. Override the default [`~PretrainedConfig.to_dict`].

        Returns:
            `Dict[str, any]`: Dictionary of all the attributes that make up this configuration instance.
        """
        output = copy.deepcopy(self.__dict__)
        output['vision_config'] = self.vision_config.to_dict()
        output['llm_config'] = self.llm_config.to_dict()
        output['model_type'] = self.__class__.model_type
        output['use_backbone_lora'] = self.use_backbone_lora
        output['use_llm_lora'] = self.use_llm_lora
        output['select_layer'] = self.select_layer
        output['force_image_size'] = self.force_image_size
        output['downsample_ratio'] = self.downsample_ratio
        output['template'] = self.template
        output['dynamic_image_size'] = self.dynamic_image_size
        output['use_thumbnail'] = self.use_thumbnail
        output['ps_version'] = self.ps_version
        output['min_dynamic_patch'] = self.min_dynamic_patch
        output['max_dynamic_patch'] = self.max_dynamic_patch

        return output
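`InternVLChatConfig.__init__` picks the language-model config class from the first entry of `llm_config['architectures']`, defaulting to Qwen2 when no `llm_config` is given. A dependency-free sketch of that dispatch, returning class names instead of instantiating `LlamaConfig`/`Qwen2Config`; `resolve_llm_config_class` and `SUPPORTED_LLM_CONFIGS` are hypothetical names. Unlike the original, this sketch raises a `ValueError` (rather than failing with a `TypeError`) when `architectures` is missing.

```python
from typing import Any, Dict, Optional

# Architecture name -> config class name, matching the two branches in __init__.
SUPPORTED_LLM_CONFIGS = {
    'LlamaForCausalLM': 'LlamaConfig',
    'Qwen2ForCausalLM': 'Qwen2Config',
}


def resolve_llm_config_class(llm_config: Optional[Dict[str, Any]]) -> str:
    """Return the config class name InternVLChatConfig would select (hypothetical helper)."""
    if llm_config is None:
        # Same default as the constructor: a Qwen2 causal LM.
        llm_config = {'architectures': ['Qwen2ForCausalLM']}
    architectures = llm_config.get('architectures') or []
    if not architectures:
        raise ValueError('llm_config must list at least one architecture')
    arch = architectures[0]
    if arch not in SUPPORTED_LLM_CONFIGS:
        raise ValueError(f'Unsupported architecture: {arch}')
    return SUPPORTED_LLM_CONFIGS[arch]


print(resolve_llm_config_class(None))  # Qwen2Config
print(resolve_llm_config_class({'architectures': ['LlamaForCausalLM']}))  # LlamaConfig
```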
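`downsample_ratio`, `min_dynamic_patch`, `max_dynamic_patch` and `use_thumbnail` together bound how many visual tokens one image costs the language model. The sketch below rests on assumptions not defined in this file: a per-tile token count of `int((image_size // patch_size) ** 2 * downsample_ratio ** 2)` (the pixel-shuffle formula used in InternVL's modeling code), a 448-pixel tile size, and a thumbnail tile appended only when an image is split into more than one tile. The helper names are hypothetical.

```python
def image_tokens_per_tile(image_size: int = 448, patch_size: int = 14,
                          downsample_ratio: float = 0.5) -> int:
    # Pixel shuffle with ratio r folds 1 / r**2 neighbouring patches into one token.
    return int((image_size // patch_size) ** 2 * downsample_ratio ** 2)


def visual_token_budget(num_tiles: int, use_thumbnail: bool = True,
                        image_size: int = 448, patch_size: int = 14,
                        downsample_ratio: float = 0.5,
                        min_dynamic_patch: int = 1, max_dynamic_patch: int = 6) -> int:
    """Estimate visual tokens for one image under the stated assumptions (hypothetical helper)."""
    if not min_dynamic_patch <= num_tiles <= max_dynamic_patch:
        raise ValueError('num_tiles must lie within [min_dynamic_patch, max_dynamic_patch]')
    # Assumed: a global thumbnail is added only when the image was actually split.
    total_tiles = num_tiles + (1 if use_thumbnail and num_tiles > 1 else 0)
    return total_tiles * image_tokens_per_tile(image_size, patch_size, downsample_ratio)


print(image_tokens_per_tile())  # 256
print(visual_token_budget(6))   # 1792
```

Under these assumptions a 448-pixel tile yields 32 x 32 = 1024 patches, which pixel shuffle at ratio 0.5 reduces to 256 tokens; six tiles plus a thumbnail then cost 7 x 256 = 1792 tokens.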

conversation.py

ADDED

|

@@ -0,0 +1,391 @@


"""
Conversation prompt templates.

We kindly request that you import fastchat instead of copying this file if you wish to use it.
If you have changes in mind, please contribute back so the community can benefit collectively and continue to maintain these valuable templates.

Modified from https://github.com/lm-sys/FastChat/blob/main/fastchat/conversation.py
"""

import dataclasses
from enum import IntEnum, auto
from typing import Dict, List, Tuple, Union


class SeparatorStyle(IntEnum):
    """Separator styles."""

    ADD_COLON_SINGLE = auto()
    ADD_COLON_TWO = auto()
    ADD_COLON_SPACE_SINGLE = auto()
    NO_COLON_SINGLE = auto()
    NO_COLON_TWO = auto()
    ADD_NEW_LINE_SINGLE = auto()
    LLAMA2 = auto()
    CHATGLM = auto()
    CHATML = auto()
    CHATINTERN = auto()
    DOLLY = auto()
    RWKV = auto()
    PHOENIX = auto()
    ROBIN = auto()
    FALCON_CHAT = auto()
    CHATGLM3 = auto()
    INTERNVL_ZH = auto()
    MPT = auto()


@dataclasses.dataclass