
```yaml
tags:
- video
dataset_info:
  features:
  - name: sample_key
    dtype: string
  - name: vid0_thumbnail
    dtype: image
  - name: vid1_thumbnail
    dtype: image
  - name: videos
    dtype: string
  - name: action
    dtype: string
  - name: action_name
    dtype: string
  - name: action_description
    dtype: string
  - name: source_dataset
    dtype: string
  - name: sample_hash
    dtype: int64
  - name: retrieval_frames
    dtype: string
  - name: differences_annotated
    dtype: string
  - name: differences_gt
    dtype: string
  - name: domain
    dtype: string
  - name: split
    dtype: string
  - name: n_differences_open_prediction
    dtype: int64
  splits:
  - name: test
    num_bytes: 15219230.154398564
    num_examples: 549
  download_size: 6445835
  dataset_size: 15219230.154398564
configs:
- config_name: default
  data_files:
  - split: test
    path: data/test-*
```

Dataset card for "VidDiffBench"

This is the dataset and benchmark for Video Action Differencing (ICLR 2025), a new task that compares how an action is performed between two videos. This page introduces the task, the dataset structure, and how to access the data. See the paper for details on dataset construction. The code for running evaluation and for benchmarking popular LMMs is at https://jmhb0.github.io/viddiff.

```bibtex
@inproceedings{burgessvideo,
  title={Video Action Differencing},
  author={Burgess, James and Wang, Xiaohan and Zhang, Yuhui and Rau, Anita and Lozano, Alejandro and Dunlap, Lisa and Darrell, Trevor and Yeung-Levy, Serena},
  booktitle={The Thirteenth International Conference on Learning Representations}
}
```

The Video Action Differencing task: closed and open evaluation

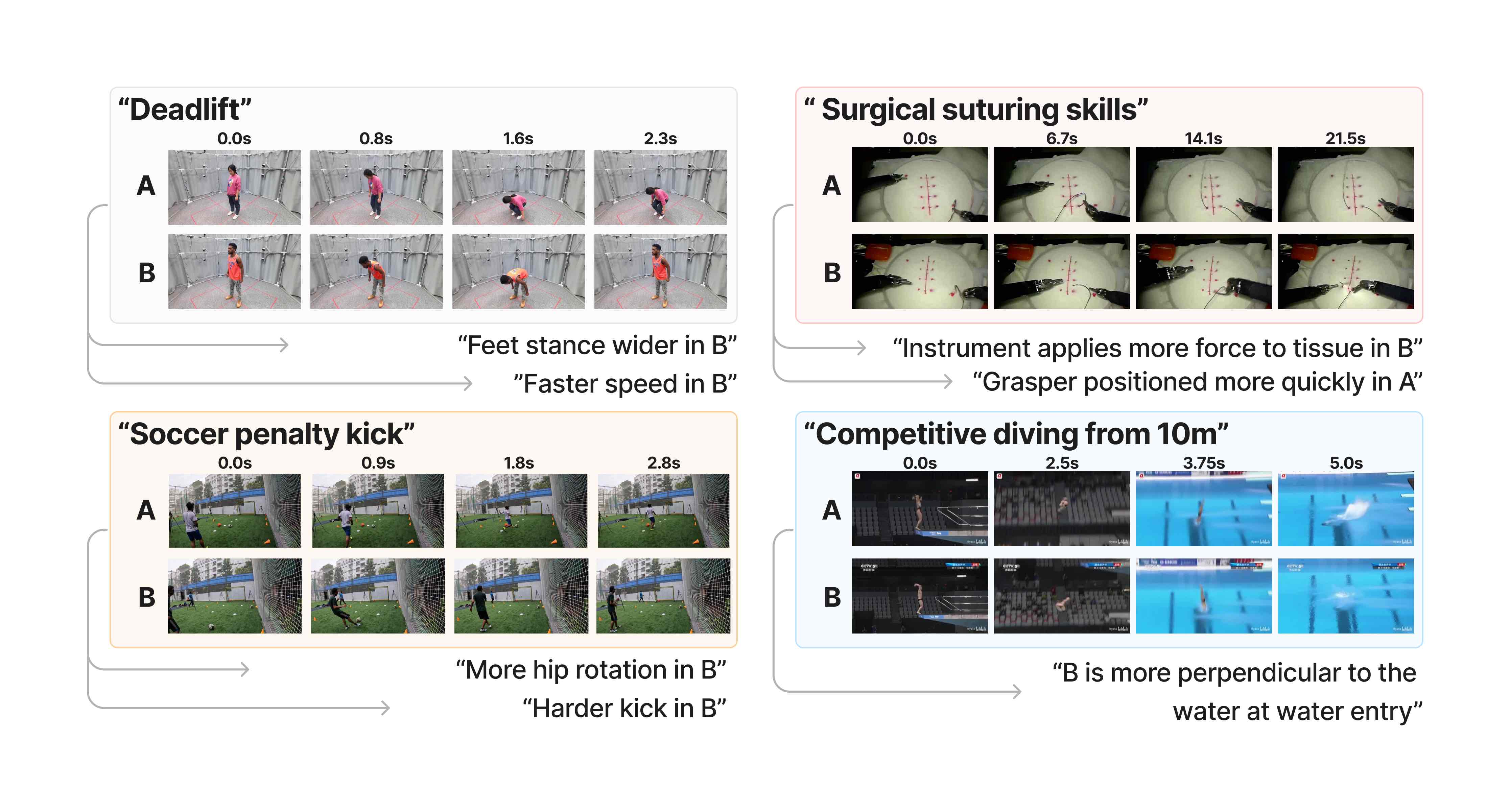

The Video Action Differencing task compares two videos of the same action. The goal is to identify differences in how the action is performed, where the differences are expressed in natural language.

In closed evaluation:

- Input: two videos of the same action, an action description string, and a list of candidate difference strings.
- Output: for each difference string, either 'a' if the statement applies more to video A, or 'b' if it applies more to video B.

In open evaluation, the model must generate the difference strings:

- Input: two videos of the same action, an action description string, and a number 'n_differences'.
- Output: a list of difference strings (at most 'n_differences'). For each difference string, also output 'a' if the statement applies more to video A, or 'b' if it applies more to video B.
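To make the closed-evaluation output concrete, here is a minimal sketch that scores per-difference 'a'/'b' predictions against ground-truth labels shaped like the `differences_gt` column. The helper name `closed_eval_accuracy`, skipping `null` labels, and counting a missing prediction as wrong are all assumptions for illustration; the official evaluation code lives at the project page linked above.

```python
from typing import Dict, Optional


def closed_eval_accuracy(
    predictions: Dict[str, str],
    ground_truth: Dict[str, Optional[str]],
) -> float:
    """Fraction of labelled differences whose predicted video ('a' or 'b') matches.

    Hypothetical helper: keys whose ground-truth label is None (null) are
    skipped, and a key with no prediction counts as incorrect.
    """
    labelled = {k: v for k, v in ground_truth.items() if v is not None}
    if not labelled:
        raise ValueError("no labelled differences to score")
    correct = sum(1 for k, label in labelled.items() if predictions.get(k) == label)
    return correct / len(labelled)


gt = {"0": "b", "1": "a", "2": None}
preds = {"0": "b", "1": "b"}
print(closed_eval_accuracy(preds, gt))  # 0.5
```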

Dataset structure

After following the 'Getting the data' section, you have `dataset` as a HuggingFace dataset and `videos` as a list. For row `i`, video A is `videos[0][i]`, video B is `videos[1][i]`, and `dataset[i]` is the annotation for the difference between the videos.
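The row-to-video pairing above can be wrapped in a small generator. This is a sketch: `iter_video_pairs` is a hypothetical helper, and it only assumes that `dataset`, `videos[0]` and `videos[1]` support `len()` and integer indexing.

```python
from typing import Any, Iterator, Sequence, Tuple


def iter_video_pairs(
    dataset: Sequence[Any], videos: Sequence[Sequence[Any]]
) -> Iterator[Tuple[Any, Any, Any]]:
    """Yield (annotation, video_a, video_b) for each row index i."""
    video_a_list, video_b_list = videos[0], videos[1]
    # All three sequences are indexed by the same row number i.
    if not (len(dataset) == len(video_a_list) == len(video_b_list)):
        raise ValueError("dataset and videos must have the same number of rows")
    for i in range(len(dataset)):
        yield dataset[i], video_a_list[i], video_b_list[i]
```

For example, `for row, video_a, video_b in iter_video_pairs(dataset, videos): ...` visits every annotated pair once.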

The videos:

- `videos[0][i]['video']` is a numpy array with shape `(nframes, H, W, 3)`.
- `videos[0][i]['fps_original']` is an int, the frames per second.
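Because each video entry carries both the frame array and `fps_original`, clip length in seconds is the frame count divided by the fps. The sketch below (hypothetical helper `video_duration_seconds`, exercised on a synthetic array) reads the frame count from the first axis only, so it works whichever axis holds the channels.

```python
import numpy as np


def video_duration_seconds(video_entry: dict) -> float:
    """Duration in seconds of one entry of videos[0] or videos[1].

    Only axis 0 (the frame count) is used, so the channel layout doesn't matter.
    """
    n_frames = video_entry["video"].shape[0]
    fps = video_entry["fps_original"]
    if fps <= 0:
        raise ValueError("fps_original must be positive")
    return n_frames / fps


# Synthetic stand-in for a real entry: 60 blank 8x8 RGB frames at 30 fps.
fake_entry = {"video": np.zeros((60, 8, 8, 3), dtype=np.uint8), "fps_original": 30}
print(video_duration_seconds(fake_entry))  # 2.0
```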

The annotations in `dataset`:

- `sample_key`: a unique key.
- `videos`: metadata about videos A and B used by the dataloader: the video filename, and the start and end frames.
- `action`: action key, like "fitness_2".
- `action_name`: a short action name, like "deadlift".
- `action_description`: a longer action description, like "a single free weight deadlift without any weight".
- `source_dataset`: the source dataset for the videos (but not for the annotations), e.g. 'humman' here.
- `split`: difficulty split, one of `{'easy', 'medium', 'hard'}`.
- `n_differences_open_prediction`: in open evaluation, the max number of difference strings the model is allowed to generate.
- `differences_annotated`: a dict with the difference strings, e.g.:

```
{
  "0": {
    "description": "the feet stance is wider",
    "name": "feet stance wider",
    "num_frames": "1"
  },
  "1": {
    "description": "the speed of hip rotation is faster",
    "name": "speed",
    "num_frames": "gt_1"
  },
  "2": null,
  ...
}
```

In this dict:

- the key is the 'key_difference';
- `description` is the 'difference string' (passed as input in closed eval, or the model must generate a semantically similar string in open eval);
- `num_frames` (not used) is '1' if an LMM could solve it from a single (well-chosen) frame, or 'gt_1' if more frames are needed;
- some values might be `null`. This is because HuggingFace `datasets` requires all elements in a column to have the same schema.

The remaining columns:

- `differences_gt`: the ground-truth label for each difference key, e.g. `{"0": "b", "1": "a", "2": null}`. For example, difference "the feet stance is wider" applies more to video B.
- `domain`: activity domain, one of `{'fitness', 'ballsports', 'diving', 'surgery', 'music'}`.
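The features at the top of this card list `differences_annotated` and `differences_gt` with dtype `string`, so when loading straight from the hub they are presumably JSON-encoded. Assuming that, the hypothetical helper below decodes both columns (and also accepts already-decoded dicts), drops `null` entries, and pairs each difference string with its label.

```python
import json
from typing import List, Optional, Tuple, Union

JsonField = Union[str, dict]


def _as_dict(field: JsonField) -> dict:
    # Assumption: string-typed columns hold JSON; dicts are passed through.
    return json.loads(field) if isinstance(field, str) else field


def labelled_differences(
    differences_annotated: JsonField, differences_gt: JsonField
) -> List[Tuple[str, str, Optional[str]]]:
    """Return (key, description, gt_label) for every non-null difference."""
    annotated = _as_dict(differences_annotated)
    gt = _as_dict(differences_gt)
    out = []
    for key, diff in annotated.items():
        if diff is None:  # null entries carry no difference
            continue
        out.append((key, diff["description"], gt.get(key)))
    return out


annotated = json.dumps({
    "0": {"description": "the feet stance is wider", "name": "feet stance wider", "num_frames": "1"},
    "1": {"description": "the speed of hip rotation is faster", "name": "speed", "num_frames": "gt_1"},
    "2": None,
})
gt = json.dumps({"0": "b", "1": "a", "2": None})
for key, desc, label in labelled_differences(annotated, gt):
    print(key, label, desc)
# 0 b the feet stance is wider
# 1 a the speed of hip rotation is faster
```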

Getting the data

Getting the dataset requires a few steps. We distribute the annotations, but since we don't own the videos, you'll have to download them elsewhere.

Get the annotations

First, get the annotations from the hub like this:

```python
from datasets import load_dataset

repo_name = "jmhb/VidDiffBench"
dataset = load_dataset(repo_name)
```
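The hub config exposes a single `test` split; the difficulty level lives in the `split` column. Since iterating over a HuggingFace `Dataset` yields one dict per row, a plain `Counter` is enough to see how rows are spread across difficulties or domains. `count_by` is a hypothetical helper, shown here on stand-in rows.

```python
from collections import Counter
from typing import Iterable, Mapping


def count_by(rows: Iterable[Mapping], column: str) -> Counter:
    """Count rows by the value of one annotation column (e.g. 'split' or 'domain')."""
    return Counter(row[column] for row in rows)


# Stand-in rows; with the real annotations, pass dataset["test"] instead.
rows = [
    {"split": "easy", "domain": "fitness"},
    {"split": "medium", "domain": "diving"},
    {"split": "easy", "domain": "fitness"},
]
print(count_by(rows, "split"))  # Counter({'easy': 2, 'medium': 1})
```

With the annotations loaded as above, `count_by(dataset["test"], "domain")` gives the per-domain counts.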

Get the videos

We get videos from prior works, which should be cited if you use the benchmark (see the end of this doc).

The source dataset for each row is in the column `source_dataset`.

First, download some .py files from this repo into a local `data/` directory:

```bash
GIT_LFS_SKIP_SMUDGE=1 git clone git@hf.co:datasets/jmhb/VidDiffBench data/
```

A few datasets let us redistribute videos, so you can download them from this HF repo like this:

```bash
python data/download_data.py
```

If you ONLY need the 'easy' split, you can stop here. These videos include the source datasets HuMMan (the 'easy' split draws only from this data) and JIGSAWS.

For the 'medium' and 'hard' splits, you'll also need videos from EgoExo4D and FineDiving. Here's how to get them:

Download EgoExo4D videos

These are needed for the 'medium' and 'hard' splits. First, request an access key from the docs (it takes 48 hours). Then follow the instructions to install the CLI download tool `egoexo`. We only need a small number of these videos, so get the list of uids from `data/egoexo4d_uids.json` and use `egoexo` to download them:

```bash
uids=$(jq -r '.[]' data/egoexo4d_uids.json | tr '\n' ' ' | sed 's/ $//')
egoexo -o data/src_EgoExo4D --parts downscaled_takes/448 --uids $uids
```

Common issue: remember to put your access key into ~/.aws/credentials.
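If `jq` isn't available, the same `egoexo` argument list can be built in Python. This sketch assumes `data/egoexo4d_uids.json` holds a JSON array (or object) of uid strings, as the `jq '.[]'` call implies; `egoexo_download_command` is a hypothetical helper and the flags are copied verbatim from the shell command above.

```python
import json
from typing import List


def egoexo_download_command(
    uids_path: str = "data/egoexo4d_uids.json",
    out_dir: str = "data/src_EgoExo4D",
) -> List[str]:
    """Build the egoexo argument list from the shell snippet, reading uids without jq."""
    with open(uids_path, encoding="utf-8") as f:
        data = json.load(f)
    # jq's '.[]' yields array elements or object values; mirror both cases.
    items = data.values() if isinstance(data, dict) else data
    uids = [str(u) for u in items]
    # Each uid becomes its own argument, like the unquoted $uids in the shell version.
    return ["egoexo", "-o", out_dir, "--parts", "downscaled_takes/448", "--uids", *uids]
```

Passing the result to `subprocess.run(..., check=True)` from the standard library would then perform the download, provided `egoexo` is installed and your credentials are set up.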

Download FineDiving videos

These are needed for the 'medium' split. Follow the instructions in the repo to request access (it takes at least a day), download the whole dataset, and set up a link to it:

```bash
ln -s <path_to_finediving> data/src_FineDiving
```
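Before building the final dataset, it can help to check that the source-video directories are in place. The sketch below is hypothetical: the directory names come from the commands above, and the split-to-source mapping is read off this card's text, so treat it as a convenience rather than an authoritative requirements list.

```python
from pathlib import Path
from typing import Dict, Iterable, List

# Hypothetical mapping, read off this card's text: EgoExo4D is needed for the
# 'medium' and 'hard' splits, FineDiving for 'medium'. Videos fetched by
# download_data.py are not checked because their location isn't stated.
REQUIRED_SOURCES: Dict[str, List[str]] = {
    "easy": [],
    "medium": ["src_EgoExo4D", "src_FineDiving"],
    "hard": ["src_EgoExo4D"],
}


def missing_sources(splits: Iterable[str], data_dir: str = "data") -> List[str]:
    """List source-video directories the given splits need but that don't exist."""
    needed = sorted({name for split in splits for name in REQUIRED_SOURCES[split]})
    # Path.exists() follows symlinks, so a dangling `ln -s` link counts as missing.
    return [name for name in needed if not (Path(data_dir) / name).exists()]
```

For example, `missing_sources(["medium", "hard"])` returns an empty list once both links are set up.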

Making the final dataset with videos

Install these packages:

```bash
pip install numpy Pillow datasets decord lmdb tqdm huggingface_hub
```
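A quick way to confirm the installs worked is to ask `importlib` whether each module can be found, without importing it. Note that Pillow is imported as `PIL`; the module list and the helper name `missing_modules` are assumptions for illustration.

```python
import importlib.util
from typing import Iterable, List

# Import names for the pip packages listed above (Pillow is imported as PIL).
REQUIRED_MODULES = ("numpy", "PIL", "datasets", "decord", "lmdb", "tqdm", "huggingface_hub")


def missing_modules(names: Iterable[str] = REQUIRED_MODULES) -> List[str]:
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]
```

`print(missing_modules())` prints `[]` when everything is installed.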

Now run:

```python
from data.load_dataset import load_dataset, load_all_videos

dataset = load_dataset(splits=['easy'], subset_mode="0")  # each entry of splits is one of {'easy', 'medium', 'hard'}
videos = load_all_videos(dataset, cache=True, cache_dir="cache/cache_data")
```

For row i: video A is videos[0][i], video B is videos[1][i], and dataset[i] is the annotation for the difference between the videos. For video A, the video itself is videos[0][i]['video'] and is a numpy array with shape (nframes,3,H,W); the fps is in videos[0][i]['fps_original'].

Passing `cache=True` to `load_all_videos` creates a cache directory at `cache/cache_data/` and saves copies of the videos as numpy memmaps (the total directory size for the whole dataset is 55 GB). Loading and caching the videos takes a few minutes per split (less for the 'easy' split) and about 25 minutes for the whole dataset. Subsequent runs should be fast: a few seconds for the whole dataset.
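The caching described above relies on numpy memory maps. As a generic illustration of that pattern (not the repo's actual caching code; `cache_video` and the one-file-per-video layout are assumptions), a video can be written to a `.npy` file once and reopened with `mmap_mode="r"`, so later runs read frames from disk lazily instead of decoding the video again.

```python
import os
import tempfile

import numpy as np


def cache_video(frames: np.ndarray, path: str) -> np.memmap:
    """Write frames to a .npy file if it isn't cached yet, then reopen it memory-mapped."""
    if not os.path.exists(path):
        out = np.lib.format.open_memmap(path, mode="w+", dtype=frames.dtype, shape=frames.shape)
        out[:] = frames
        out.flush()
        del out  # close the writable map before reopening read-only
    return np.load(path, mmap_mode="r")


with tempfile.TemporaryDirectory() as tmp:
    frames = np.zeros((4, 8, 8, 3), dtype=np.uint8)
    frames[1] = 255
    cached = cache_video(frames, os.path.join(tmp, "sample.npy"))
    print(cached.shape, bool((cached == frames).all()))  # (4, 8, 8, 3) True
    del cached  # release the file before the directory is removed
```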

Finally, you can load a subset: for example, `subset_mode='3_per_action'` takes 3 video pairs per action, while `subset_mode="0"` loads all of them.
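To make the idea of `'3_per_action'` concrete, here is a sketch that keeps at most `k` rows per value of the `action` column, in the order given. It is a hypothetical helper, not the repo's implementation, which may pick the pairs differently.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Mapping


def first_k_per_action(rows: Iterable[Mapping], k: int = 3) -> List[Mapping]:
    """Keep at most k rows for each value of the 'action' column, in input order."""
    seen: Dict[str, int] = defaultdict(int)
    kept = []
    for row in rows:
        if seen[row["action"]] < k:
            seen[row["action"]] += 1
            kept.append(row)
    return kept
```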

More dataset info

We have more dataset metadata in this dataset repo:

- Differences taxonomy: `data/difference_taxonomy.csv`.
- Actions and descriptions: `data/actions.csv`.
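The card doesn't list the columns of these CSV files, so a safe first step is to load them generically and inspect the header. `read_metadata_csv` is a hypothetical helper built on the standard-library `csv.DictReader`, and UTF-8 encoding is an assumption.

```python
import csv
from typing import Dict, List


def read_metadata_csv(path: str) -> List[Dict[str, str]]:
    """Load a metadata CSV such as data/actions.csv as a list of row dicts keyed by header."""
    with open(path, newline="", encoding="utf-8") as f:
        return list(csv.DictReader(f))
```

For instance, `list(read_metadata_csv("data/actions.csv")[0])` returns the column names, assuming the file has at least one data row.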

License

The annotations and all other non-video metadata are released under the MIT license.

The videos retain the license of the original dataset creators, and the source dataset is given in dataset column source_dataset.

- EgoExo4D: the license is online at this link.
- JIGSAWS: release notes at this link.
- HuMMan: uses the "S-Lab License 1.0" at this link.
- FineDiving: uses this MIT license.

Citation

Below is the citation for our paper, and the original source datasets:

```bibtex
@inproceedings{burgessvideo,
  title={Video Action Differencing},
  author={Burgess, James and Wang, Xiaohan and Zhang, Yuhui and Rau, Anita and Lozano, Alejandro and Dunlap, Lisa and Darrell, Trevor and Yeung-Levy, Serena},
  booktitle={The Thirteenth International Conference on Learning Representations}
}

@inproceedings{cai2022humman,
  title={{HuMMan}: Multi-modal 4d human dataset for versatile sensing and modeling},
  author={Cai, Zhongang and Ren, Daxuan and Zeng, Ailing and Lin, Zhengyu and Yu, Tao and Wang, Wenjia and Fan, Xiangyu and Gao, Yang and Yu, Yifan and Pan, Liang and Hong, Fangzhou and Zhang, Mingyuan and Loy, Chen Change and Yang, Lei and Liu, Ziwei},
  booktitle={17th European Conference on Computer Vision, Tel Aviv, Israel, October 23--27, 2022, Proceedings, Part VII},
  pages={557--577},
  year={2022},
  organization={Springer}
}

@inproceedings{parmar2022domain,
  title={Domain Knowledge-Informed Self-supervised Representations for Workout Form Assessment},
  author={Parmar, Paritosh and Gharat, Amol and Rhodin, Helge},
  booktitle={Computer Vision--ECCV 2022: 17th European Conference, Tel Aviv, Israel, October 23--27, 2022, Proceedings, Part XXXVIII},
  pages={105--123},
  year={2022},
  organization={Springer}
}

@inproceedings{grauman2024ego,
  title={Ego-exo4d: Understanding skilled human activity from first-and third-person perspectives},
  author={Grauman, Kristen and Westbury, Andrew and Torresani, Lorenzo and Kitani, Kris and Malik, Jitendra and Afouras, Triantafyllos and Ashutosh, Kumar and Baiyya, Vijay and Bansal, Siddhant and Boote, Bikram and others},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  pages={19383--19400},
  year={2024}
}

@inproceedings{gao2014jhu,
  title={Jhu-isi gesture and skill assessment working set (jigsaws): A surgical activity dataset for human motion modeling},
  author={Gao, Yixin and Vedula, S Swaroop and Reiley, Carol E and Ahmidi, Narges and Varadarajan, Balakrishnan and Lin, Henry C and Tao, Lingling and Zappella, Luca and B{\'e}jar, Benjam{\i}n and Yuh, David D and others},
  booktitle={MICCAI workshop: M2cai},
  volume={3},
  number={2014},
  pages={3},
  year={2014}
}
```